Managing Editor’s Note: Next Wednesday at 2 p.m. ET, Jeff is hosting an important AI Doomsday event…

As this year’s market volatility rages on, Jeff says we’re only getting started in what he believes will be an extended period of disruption during which AI is going to hurt more businesses.

Fortunately, he has a strategy in place to help profit from the losers in this disruption window. You can go here to sign up with one click to hear all the details from Jeff on April 8…

Anthropic’s Multi-Billion Mistake

Jeff Brown, Founder and CEO

Something unthinkable happened in high tech in the last 24 hours.

It’s an event so significant… it would typically result in job losses, the loss of future funding, and the loss of the company’s competitive advantage.

It might even reshape the entire industry the company operates in.

Two days ago, Anthropic – one of the world’s leading frontier AI model developers – released an update (version 2.1.88) of its Claude Code software.

Claude Code is widely regarded as the highest-performing AI coding tool on the market today.

Claude Code is also the AI behind the runaway adoption of OpenClaw, the open-source agentic AI phenomenon (recently acquired by OpenAI) that we last explored in The Bleeding Edge – Agentic AIs Need Social Interactions Too.

Updating software frequently is something every software company does.

But this release was different.

The Update Heard Around the World

Soon after Anthropic released the update, a security researcher discovered that the software package included a source map file…

And that file exposed all of Claude Code’s source code… all 512,000 lines of it.

For free. Open for anyone, anywhere to access. Just sitting right there.

Absolute disaster.

And it’s so much worse.

Rather than alerting Anthropic, the researcher who discovered the mistake posted on X… and included a link to the source code.

What happened next is an absolute nightmare for Anthropic.

The source code was uploaded into a public GitHub repository…

Where it became the fastest software repository to reach 50,000 forks (each fork is basically a copy of the source code).

And it all happened within a matter of hours.

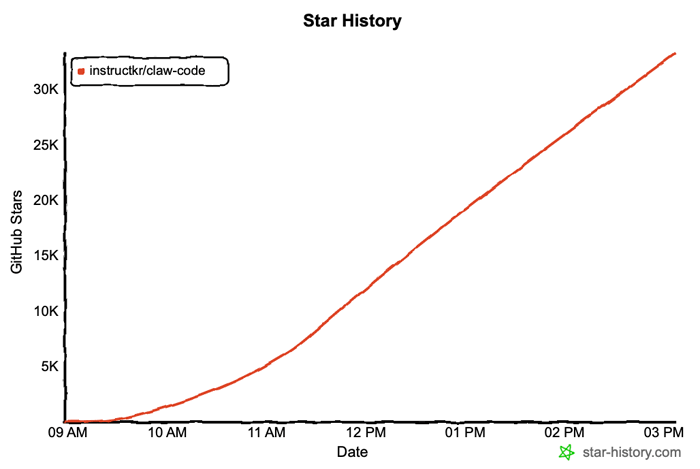

Claude Code’s Source Code Forks to 50,000

Source: instructkr/claw-code

At the time of this writing, there are already about 91,900 copies of Claude Code’s source code in the wild.

And with every refresh of the GitHub page, the number continues to increase.

How could this have happened?

It wasn’t malicious or intentional.

A single individual in the company ran a production build of Claude Code in preparation for publishing the update.

The build tooling generated a .map file (a source map), which links the compiled code back to its original source and can be used to reconstruct that source.

And then the Anthropic employee published it all.
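To make the mechanics concrete, here is a minimal Python sketch of how a source map can hand over original source code, and how a pre-publish check might catch it. It relies on one fact about the standard source map (version 3) format: the file is JSON, with a "sources" list of original file names and an optional "sourcesContent" list holding the full text of each file. Whether the file Anthropic shipped embedded its source this way is an assumption on my part, and the function names below are hypothetical, not anything from Anthropic's tooling.

```python
import json
from pathlib import Path


def find_source_maps(package_dir):
    """Return every *.map file under package_dir, sorted for stable output."""
    return sorted(Path(package_dir).rglob("*.map"))


def recover_sources(map_path):
    """Return {original_path: original_text} for sources embedded in a map.

    Returns an empty dict if the file is not valid JSON or embeds no sources.
    """
    try:
        data = json.loads(Path(map_path).read_text(encoding="utf-8"))
    except ValueError:
        # Covers both malformed JSON and undecodable bytes.
        return {}
    if not isinstance(data, dict):
        return {}
    names = data.get("sources") or []
    contents = data.get("sourcesContent") or []
    # Entries in sourcesContent may be null when a file was not embedded.
    return {
        name: text
        for name, text in zip(names, contents)
        if text is not None
    }


def check_before_publish(package_dir):
    """Return the source maps that would ship original source code."""
    return [p for p in find_source_maps(package_dir) if recover_sources(p)]
```

A release script could call check_before_publish on the build output folder and refuse to publish if the returned list is not empty. That is the kind of simple gate that turns a one-person mistake into a failed build instead of a public leak.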

It was just a stupid mistake by a software engineer.

But for one of the world’s leading AI companies – now worth $380 billion – it’s a reckless, gaping hole revealing the company’s extremely weak security processes.

Which is ironic… since it’s coming from the company best known for “AI Safetyism.”

Anthropic’s CEO, Dario Amodei, is well-known for calling AI an existential threat and has declared Anthropic the “most safety-focused lab on Earth.”

Supply Chain Risk

Even more ironic is that mere weeks ago, Anthropic and others ridiculed the Pentagon for blacklisting Anthropic’s AI from use, citing it as a supply chain risk for the government.

It is now crystal clear that it was a very smart move by the Pentagon.

And it’s also clear that the Pentagon knew a whole lot more about the inner workings of Anthropic and its software.

Just imagine the implications… if Anthropic were to accidentally release a custom fork of its frontier AI model, which had been designed for U.S. intelligence services and contained highly classified information.

It would be invaluable to U.S. adversaries and potentially devastating to U.S. national security.

But what about the implications for Anthropic, and for that matter, the AI industry at large?

After all, investors in Anthropic have given the company $61.15 billion to date – in the race to achieve artificial general intelligence (AGI).

Its last funding round was a $30.6 billion raise this February.

Anthropic has literally spent tens of billions of dollars developing its proprietary AI models.

And it just gave Claude Code away for free.

Can you imagine the phone calls Anthropic CEO Dario Amodei has been receiving since the release of its source code? Investors are not happy right now.

Of course, Anthropic has been in damage control mode since the release.

Since this morning, the company has issued more than 8,000 copyright takedown requests for the removal of the source code.

But do you really think that software developers in China, North Korea, Russia, etc. will adhere to U.S. copyright laws?

No way.

Anthropic has insisted that the source code release didn’t expose any customer-specific information, or even the weights of Anthropic’s AI models.

That’s probably true, but the reality is that the damage is done.

The source code is out there, and it continues to be copied and forked at a rapid pace.

It is out of Anthropic’s hands. The genie is out of the bottle. There’s no way to turn back time.

The source code literally reveals Anthropic’s software architecture, something that was once nearly impossible to reverse engineer.

It shows any software developer, company, or government precisely how Claude Code works – knowledge that came at a cost of billions of dollars of investment.

Now, any competitor, or any new startup, can leverage this knowledge to make competing products in a matter of months, which immediately devalues Anthropic’s software.

And if you’re an enterprise customer of Anthropic, how could you possibly trust the company with access to your proprietary and sensitive data?

The same is true for any government working with Anthropic.

In Development, Too!

To make matters worse, the software source code release also revealed new product features that Anthropic has in development.

One that particularly stands out is multi-agent orchestration along the lines of what xAI has successfully implemented with Grok 4.2.

It is also developing new features around what it calls “Dream Memory Consolidation,” which is designed to defragment and consolidate long-term memory of the models.

This will enable an AI to have long-term, personalized memory of all interactions with an end user, company, or government.

And perhaps the oddest new feature in development is what Anthropic calls a “Tamagotchi-style pet companion,” with gacha mechanics, species, rarities, and ASCII art stats.

Aside from the gamification of having a digital pet to care for, the pet companion “sits beside your input box and reacts to your coding.” It’s like a little digital creature looking over your shoulder as you work, an intelligent one that you can interact with.

And at the center of it all is a new KAIROS feature that will become an always-on, 24/7 agentic AI, capable of being productive when we play, relax, or sleep.

That’s what’s coming. And now we know this definitively, thanks to one massive, multibillion-dollar screw-up.

And it’s coming for all of the leading frontier AI models.

In a normal market environment, it would be hard for a company like Anthropic to recover from a massive mistake like this. But these are not normal times…

First, not all of Anthropic’s source code was released – just that related to Claude Code.

Second, Bleeding Edge readers can already grok the implications for the entire market, now that one of the world’s leading code bases has basically been made open source for the world’s software engineers.

This will only act as fuel for an already frenzied race to AGI greatness… No need to invest billions to build a model. Just fork Anthropic’s source code, make some improvements, and you’ve got a state-of-the-art product.

Despite the massive flub, any company that attains artificial general intelligence has the potential to be worth more than a trillion dollars.

Anthropic will get there, as will OpenAI. And I would argue xAI is already there in the laboratory… with what will become Grok 5.

1125 N Charles St, Baltimore, MD 21201

www.brownstoneresearch.com

© 2026 Brownstone Research. All rights reserved. Any reproduction, copying, or redistribution of our content, in whole or in part, is prohibited without written permission from Brownstone Research.