Managing Editor’s Note: Today, we’re handing the reins to our friend and colleague Jason Bodner.

Longtime Brownstone Research members may remember Jason as a regular contributor from years past. With a Wall Street resume that spans decades, Jason specializes in quantitative strategies that identify high-upside “outlier” stocks.

Today, he shows why the AI trade is becoming multi-faceted. The big question now isn’t how fast an AI can “think,” but how quickly it can “remember.”

Read on…

AI’s Biggest Bottleneck Is…

Jason Bodner

Contributing Editor, The Bleeding Edge

For the last two years, the AI story has been dominated by compute. Faster chips, bigger clusters, more GPUs.

That made sense. More intelligence requires more processing power. As Jeff has discussed in The Bleeding Edge before, AI compute is currently doubling roughly every six months – a pattern that is accelerating.
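To put that doubling rate in perspective, here is a rough sketch of the arithmetic. It assumes the six-month doubling period stays constant, which is a simplification (the text says the pace is accelerating), and the function name is only illustrative.

```python
# Growth implied by a fixed doubling period.
# The six-month figure comes from the text; holding it constant is an assumption.
DOUBLING_PERIOD_MONTHS = 6


def growth_multiple(months: float, doubling_period: float = DOUBLING_PERIOD_MONTHS) -> float:
    """Return how many times larger compute is after `months` of steady doubling."""
    return 2 ** (months / doubling_period)


for years in (1, 2, 3):
    print(f"After {years} year(s): {growth_multiple(12 * years):.0f}x")
```

At that pace, compute would grow 4x in one year and 16x in two.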

But something has changed. The focus is no longer solely on how fast AI can think. That problem is being addressed by photonics, fiber optics, and systems moving data near the speed of light.

The constraint today is different. We are now also limited by how fast AI can remember.

Intelligence Factories

AI systems don’t just compute. They constantly retrieve data. Every prompt, every model output, every training run depends on moving enormous amounts of information into the chip at the right time. That’s where things start to break.

Even the most advanced AI chips spend a meaningful amount of time idle, not because they lack power, but because they are waiting for data. That delay comes from memory, not compute.
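A simple way to picture this is a toy model in which each step of work needs both compute time and data-delivery time. The sketch below is an illustration only: the function name and all the millisecond figures are hypothetical, not measurements from any real chip.

```python
def chip_utilization(compute_ms: float, data_wait_ms: float, overlapped: bool = True) -> float:
    """Fraction of each step the chip spends computing.

    If data loading overlaps with compute, a step lasts as long as the slower
    of the two. Otherwise the two run back to back.
    """
    if overlapped:
        step_ms = max(compute_ms, data_wait_ms)
    else:
        step_ms = compute_ms + data_wait_ms
    return compute_ms / step_ms


# Hypothetical numbers, chosen only to illustrate the effect.
print(chip_utilization(10.0, 8.0))   # memory keeps up: chip is fully busy
print(chip_utilization(10.0, 15.0))  # memory is slower: chip idles part of each step
print(chip_utilization(5.0, 15.0))   # a chip twice as fast, same memory: even more idle time
```

In the last case, the step still takes 15 ms. Doubling the chip's speed changes nothing about throughput if memory is the slower side.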

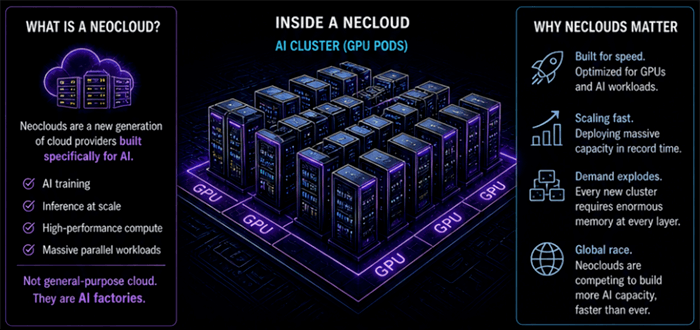

A major driver is the rise of neoclouds. These are a new generation of specialized cloud providers built specifically for AI workloads, in contrast to traditional hyperscaler clouds. Instead of running general applications, they are building massive GPU clusters for training and inference.

Think of them as factories for intelligence. And these factories require memory at every layer – fast memory to feed the chip, working memory for active datasets, and large-scale storage for everything else.

As these systems scale, memory demand does not grow gradually. It accelerates.

The Memory Hierarchy

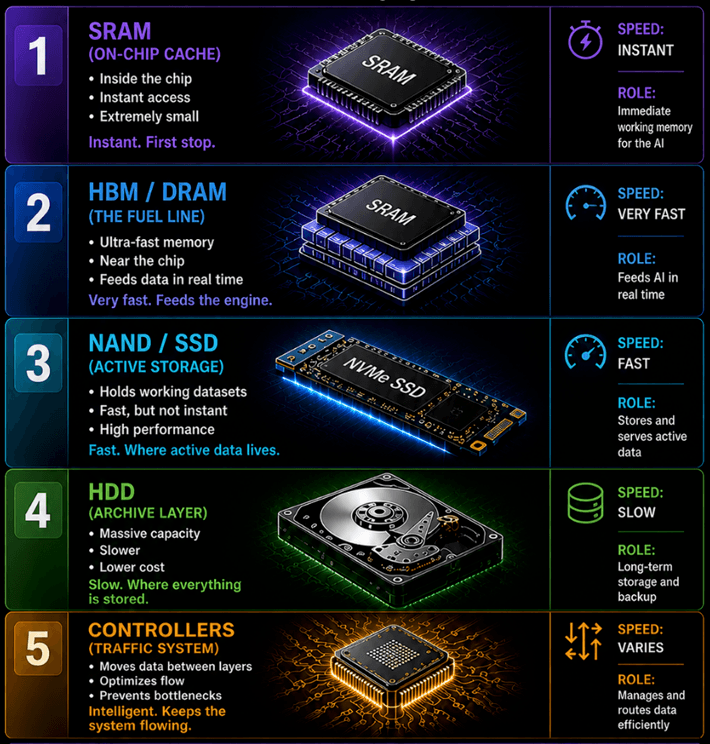

Memory is not one thing. It’s a hierarchy. At the top sits SRAM, or Static Random Access Memory. This is on-chip memory. It is effectively instant, but extremely small.

Next are DRAM and HBM. DRAM, or Dynamic Random Access Memory, sits close to the processor and delivers data quickly. HBM, or High Bandwidth Memory, goes further by stacking DRAM dies vertically and placing the stack right next to the chip, so large amounts of data can move at once.

Beyond that is NAND flash. This is non-volatile memory that retains data without power. SSDs use NAND to store active datasets and models. It is fast, but not immediate.

Further out are HDDs or Hard Disk Drives. These prioritize capacity over speed and store large volumes of data at lower cost.

Controllers manage how data moves between these layers, so the system does not stall.

Each layer has a role, and each runs at a different speed. The further the data is from the chip, the longer it takes to arrive. Data can now move at extraordinary speeds. The problem is getting it to the right place at the right time.

Memory does not deliver data in a steady stream. It arrives in bursts, at different speeds, from different layers. It resembles a workforce commuting from different parts of a city, all arriving at different times.

That is why performance does not scale perfectly. The chip is only as effective as the system feeding it.
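The effect of the hierarchy can be sketched with a weighted average of access times. The latencies below are rough order-of-magnitude assumptions for illustration, not vendor specifications, and the tier names and request mix are hypothetical.

```python
from typing import Mapping

# Illustrative order-of-magnitude latencies in nanoseconds (assumptions, not measurements).
TIER_LATENCY_NS = {
    "SRAM": 1,
    "DRAM/HBM": 100,
    "NAND SSD": 100_000,
    "HDD": 10_000_000,
}


def average_access_ns(share_by_tier: Mapping[str, float]) -> float:
    """Average latency when each tier serves the given share of requests."""
    total = sum(share_by_tier.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return sum(TIER_LATENCY_NS[tier] * share for tier, share in share_by_tier.items())


# Hypothetical mix: 99.9% of requests are served by the two fastest tiers.
mostly_fast = {"SRAM": 0.90, "DRAM/HBM": 0.099, "NAND SSD": 0.001, "HDD": 0.0}
print(f"{average_access_ns(mostly_fast):.1f} ns on average")
```

Under these assumed numbers, the 0.1% of requests that reach the SSD account for most of the average wait. A small share of slow trips can set the pace for the whole system.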

The Memory Shock

Memory has always been cyclical.

Jeff Brown knows this better than anybody. Here’s how he described the phenomenon in the August 2020 edition of The Near Future Report:

Most forms of memory are considered to be commodities. If you can get the same density, processing rates, and power consumption, manufacturers typically go with the cheapest provider prices when supply is greater than demand.

When demand is high and supply is low, memory manufacturers enjoy high margins through higher pricing. The semiconductor industry then tends to overbuild production capacity, attracted by the high margin. Over time, supply exceeds demand, and margins drop.

That was true historically, but AI is introducing a new kind of memory demand shock. Building new capacity takes years and billions of dollars. Supply is concentrated among a small number of players. In memory, the big three are Micron (MU), Samsung, and SK Hynix.

Demand can surge within quarters. When that imbalance appears, prices do not gradually rise. They spike. We are already seeing it. DRAM prices rose approximately 50% in 2025. NAND pricing is recovering. Margins are expanding across the industry. Some executives are already signaling shortages extending years into the future.

Recent earnings tell the story.

When Micron reported Q2 earnings in March, the results were downright shocking. The company reported earnings per share (EPS) of $12.20. In Q2 of the previous year, that figure was $1.56. That’s nearly 7X growth… in one year.
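For readers who want to check the math, here is a quick sketch using the two EPS figures quoted above. The variable names are only illustrative.

```python
# EPS figures as quoted in the text.
eps_latest = 12.20
eps_year_ago = 1.56

# Two common ways to express the change.
multiple = eps_latest / eps_year_ago                          # latest as a multiple of year-ago
growth_pct = (eps_latest - eps_year_ago) / eps_year_ago * 100  # percentage increase

print(f"Latest EPS is {multiple:.2f}x the year-ago figure")
print(f"Year-over-year growth: {growth_pct:.0f}%")
```

The latest figure is about 7.8 times the year-ago number, which works out to an increase of roughly 680%, or close to 7X growth.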

Revenue is beating expectations, growth rates are accelerating, and guidance is aggressive and may still prove conservative. This is not a short-term bump. It is the early stage of a structural shift.

AI is increasing demand not just for compute, but for everything that supports it. Now, the focus is shifting to bottlenecks. And memory is one of the most critical.

Regards,

Jason Bodner

Contributing Editor, The Bleeding Edge

P.S. Hi, Jeff’s managing editor here.

We hope you enjoyed this special issue from our friend and colleague, Jason Bodner. You’ll be hearing more from him soon, so be sure to stay tuned.

1125 N Charles St, Baltimore, MD 21201

www.brownstoneresearch.com

Brownstone Research welcomes your feedback and questions. But please note: The law prohibits us from giving personalized advice.

To contact Customer Service, call toll free Domestic/International: 1-888-512-0726, Mon-Fri, 9am-7pm ET, or email us here.

© 2026 Brownstone Research. All rights reserved. Any reproduction, copying, or redistribution of our content, in whole or in part, is prohibited without written permission from Brownstone Research.